Article

and functional learning

Yuchi Huo1,2,3,

Hujun Bao1,2,

Yifan Peng4,

Chen Gao2,

Wei Hua2,

Qing Yang2,

Haifeng Li2,

Rui Wang1, and

Sung-Eui Yoon3

1State Key Lab of CAD&CG, Zhejiang University, Hangzhou, China

2Zhejiang Lab, Hangzhou, China

3Korea Advanced Institute of Science and Technology, Daejeon, South Korea

4The University of Hong Kong, Hong Kong, SAR, China

[Paper]

Abstract

This research proposes a deep-learning paradigm, termed functional learning (FL), to physically train a loose neuron array: a group of non-handcrafted, non-differentiable, and loosely connected physical neurons whose connections and gradients cannot be expressed explicitly. The paradigm targets the training of non-differentiable hardware and therefore solves many interdisciplinary challenges at once: the precise modeling and control of high-dimensional systems, the on-site calibration of multimodal hardware imperfections, and the end-to-end training of non-differentiable and modeless physical neurons through implicit gradient propagation. It offers a methodology for building hardware without handcrafted design, strict fabrication, or precise assembly, thus forging paths for hardware design, chip manufacturing, physical neuron training, and system control. In addition, the functional learning paradigm is numerically and physically verified with an original light field neural network (LFNN). The LFNN realizes a programmable incoherent optical neural network, a well-known challenge, and delivers light-speed, high-bandwidth, and power-efficient neural network inference by processing parallel visible-light signals in free space. As a promising supplement to existing power- and bandwidth-constrained digital neural networks, the light field neural network has various potential applications: brain-inspired optical computation, high-bandwidth power-efficient neural network inference, and light-speed programmable lenses, displays, and detectors that operate in visible light.

Overview

Functional learning (FL) paradigm

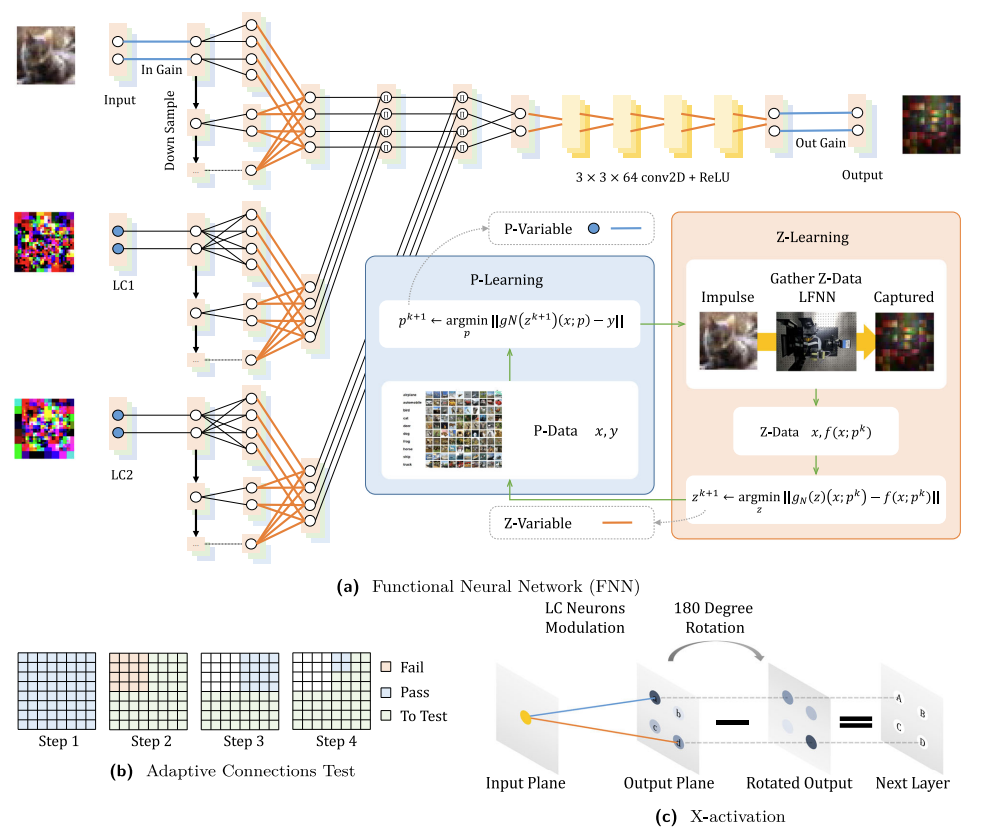

Fig. 3 | Functional learning (FL) paradigm. This figure illustrates details of the network design and training process. (a) The first part of the FNN is a physically inspired functional basis block placed before the convolutional neural network (CNN) layers. (b) The process of testing LC neuron connections with the quad-tree search method. (c) By training the LC neurons' parameters, a neuron on the input plane can either activate or deactivate a neuron in the next layer. More detailed descriptions are provided in the paper.
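To give a rough feel for the quad-tree search mentioned in panel (b), the following is a minimal sketch, not the procedure from the paper. It assumes a hypothetical black-box probe `responds(region)` that reports whether lighting a square region of the input panel produces any response at a chosen detector; the probe, the 8 x 8 panel size, and the hidden connection set are all illustrative assumptions.

```python
from typing import Callable, List, Tuple

Region = Tuple[int, int, int]  # (top row, left column, side length)


def quadtree_search(responds: Callable[[Region], bool], size: int) -> List[Tuple[int, int]]:
    """Find connected pixels on a size x size panel (size must be a power of two)."""
    connected = []
    pending = [(0, 0, size)]
    while pending:
        row, col, side = pending.pop()
        if not responds((row, col, side)):
            continue  # no response: prune this whole region
        if side == 1:
            connected.append((row, col))  # a single responding pixel
            continue
        half = side // 2
        # Split the responding region into its four quadrants.
        pending.extend([
            (row, col, half),
            (row, col + half, half),
            (row + half, col, half),
            (row + half, col + half, half),
        ])
    return sorted(connected)


# Hypothetical stand-in for the hardware: two hidden connected pixels.
hidden = {(1, 2), (6, 5)}
probe_count = {"n": 0}


def simulated_probe(region: Region) -> bool:
    """Report whether the region covers any hidden connected pixel."""
    probe_count["n"] += 1
    row, col, side = region
    return any(row <= r < row + side and col <= c < col + side for r, c in hidden)


found = quadtree_search(simulated_probe, 8)
print(found, probe_count["n"])
```

When connections are sparse, most regions are pruned early, so this toy run uses fewer probes than the 64 needed to test every pixel of the panel individually.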

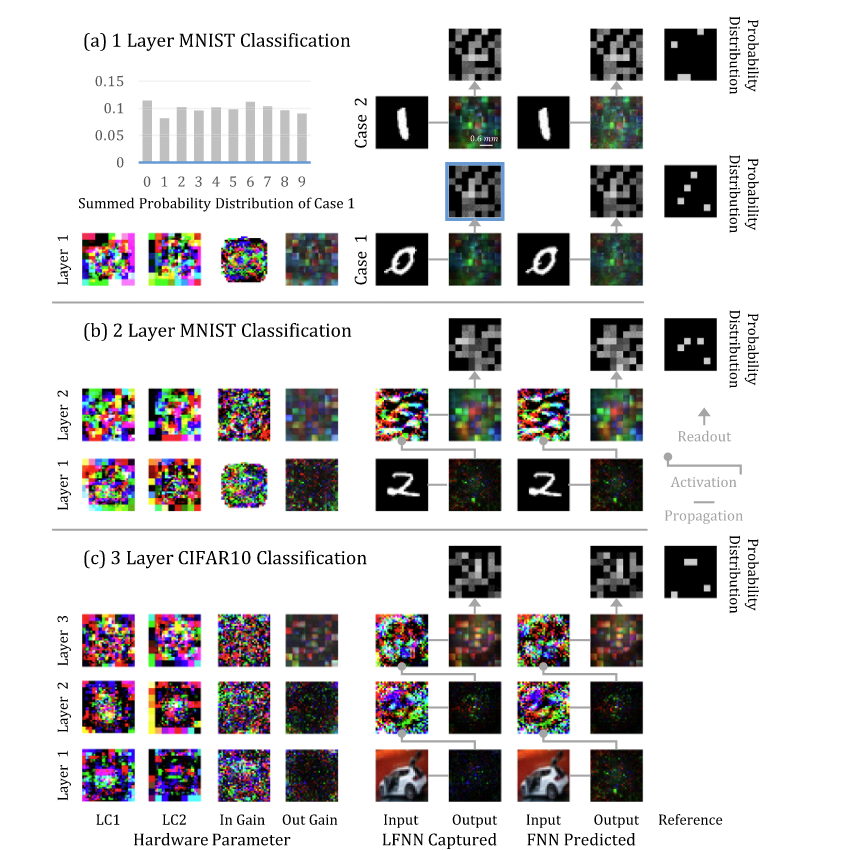

Classification experiment

Fig. 4 | Classification experiment. We test LFNNs with one to three layers on the MNIST and CIFAR10 datasets and visualize the results in (a), (b), and (c), respectively. Results on object recognition and depth estimation can be found in the paper.

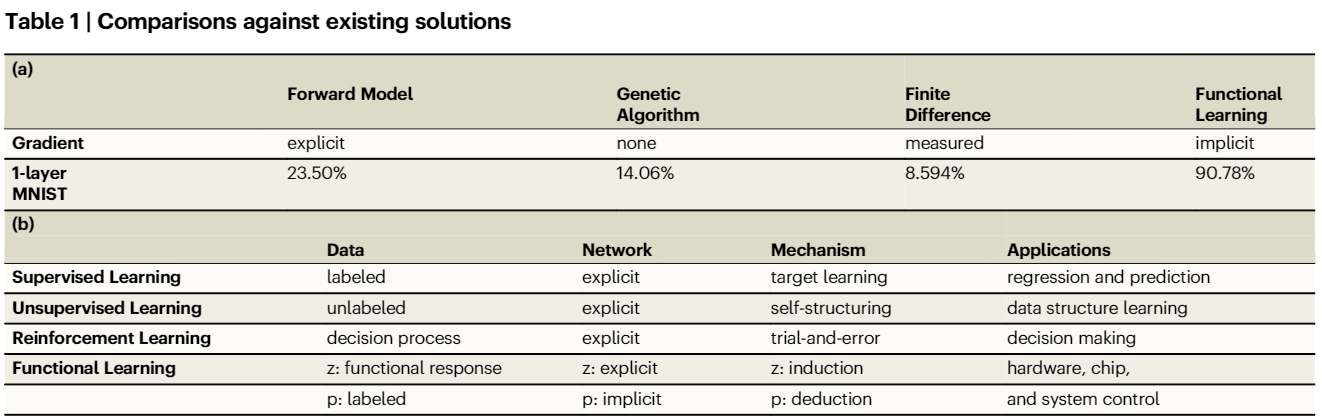

(a) Classification accuracy of the 1-layer LFNN trained on the MNIST dataset with different training paradigms. The 1-layer LFNN consists of 2 LC panels. The genetic algorithm and finite difference are given approximately the same training time as functional learning. The forward model is given the same number of epochs as functional learning. A detailed discussion is in the supplementary document. (b) Different intuitions of existing learning paradigms.
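As background for the finite-difference baseline named in this comparison, here is a minimal sketch of finite-difference training on a toy black box. It is an illustration under stated assumptions, not the experimental setup of the paper: `measure_loss`, the `TARGET` values, the step size, and the learning rate are all hypothetical, and the `tanh` response merely stands in for hardware whose internals are not observable.

```python
import math

TARGET = [0.2, -0.5, 0.9]  # hypothetical desired outputs
calls = {"n": 0}           # counts how many measurements were taken


def measure_loss(params):
    """Toy stand-in for a hardware measurement; only its output is observable."""
    calls["n"] += 1
    return sum((math.tanh(p) - t) ** 2 for p, t in zip(params, TARGET))


def finite_difference_grad(f, params, eps=1e-4):
    """Estimate the gradient with central differences: two measurements per parameter."""
    grad = []
    for i in range(len(params)):
        plus = list(params)
        minus = list(params)
        plus[i] += eps
        minus[i] -= eps
        grad.append((f(plus) - f(minus)) / (2 * eps))
    return grad


params = [0.0, 0.0, 0.0]
initial_loss = measure_loss(params)
for _ in range(200):
    grad = finite_difference_grad(measure_loss, params)
    params = [p - 0.5 * g for p, g in zip(params, grad)]  # plain gradient descent
final_loss = measure_loss(params)
print(initial_loss, final_loss, calls["n"])
```

Each gradient estimate here costs two measurements per parameter, so the measurement count grows linearly with the number of trainable parameters, which is one reason such a baseline can become expensive for large neuron arrays.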

The full set of experiments, including object recognition and depth estimation, can be found in the paper.
